We deploy Agent Orcha into your environment.

From scoping to production in two weeks. On-premises, air-gapped, cloud, or hybrid. Your team operates it independently from day one.

Choose how we work together

Sprint deployment

Two-week engagement. We scope, configure, and deploy working AI workflows. Money-back guarantee if we don't deliver.

Managed platform (PaaS)

We handle infrastructure, updates, and monitoring. SLA-backed uptime. Start managed, move on-prem later.

Training & workshops

Structured training for developers, operators, and security teams tailored to your deployment.

Advisory & custom development

Bespoke agents, integrations, and workflow automation built to your exact specifications.

What ships with every deployment

Full platform. No feature gating.

Multi-agent workflows

YAML-defined agents with step-based and ReAct workflows. Retry logic, conditional branching, and human approval gates.
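
To make those three controls concrete, here is a minimal sketch of a step runner with retries, a conditional branch, and an approval gate. It is an illustration only, not Agent Orcha's engine or YAML schema: `Step`, `run_workflow`, and the `approve` callback are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    """Hypothetical step definition; field names are assumptions, not the product schema."""
    name: str
    run: Callable[[dict], dict]                      # receives shared context, returns updates
    retries: int = 0                                 # extra attempts after the first failure
    when: Optional[Callable[[dict], bool]] = None    # conditional branch: step is skipped if False
    needs_approval: bool = False                     # human approval gate before running


def run_workflow(steps, context, approve):
    """Run steps in order and return (context, log of (step name, outcome))."""
    log = []
    for step in steps:
        # Conditional branching: skip the step when its predicate is False.
        if step.when is not None and not step.when(context):
            log.append((step.name, "skipped"))
            continue
        # Approval gate: stop the workflow if the reviewer says no.
        if step.needs_approval and not approve(step.name, context):
            log.append((step.name, "rejected"))
            break
        # Retry logic: one initial attempt plus `retries` further attempts.
        for attempt in range(step.retries + 1):
            try:
                context.update(step.run(context))
                log.append((step.name, "ok"))
                break
            except Exception:
                if attempt == step.retries:
                    log.append((step.name, "failed"))
                    return context, log
    return context, log
```

In a real deployment the `approve` callback would wait for a person; in the sketch it is any function that returns `True` or `False`.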

Unified model runtime

Text, image (FLUX.2), TTS (Qwen3), and embedding models served through one GPU-managed cache. Hardware is detected automatically.

Knowledge stores

PostgreSQL, MySQL, SQLite, files, web APIs. Vector + graph retrieval. Auto-reindexing. No external vector DB.

Sandboxed execution

JS VMs, non-root shells, browser automation via CDP, scoped file ops. Every boundary containerized and logged.

Tool ecosystem

Model Context Protocol (MCP) support, custom JS functions, and built-in tools for approval and memory. Knowledge search and SQL are available as agent tools.

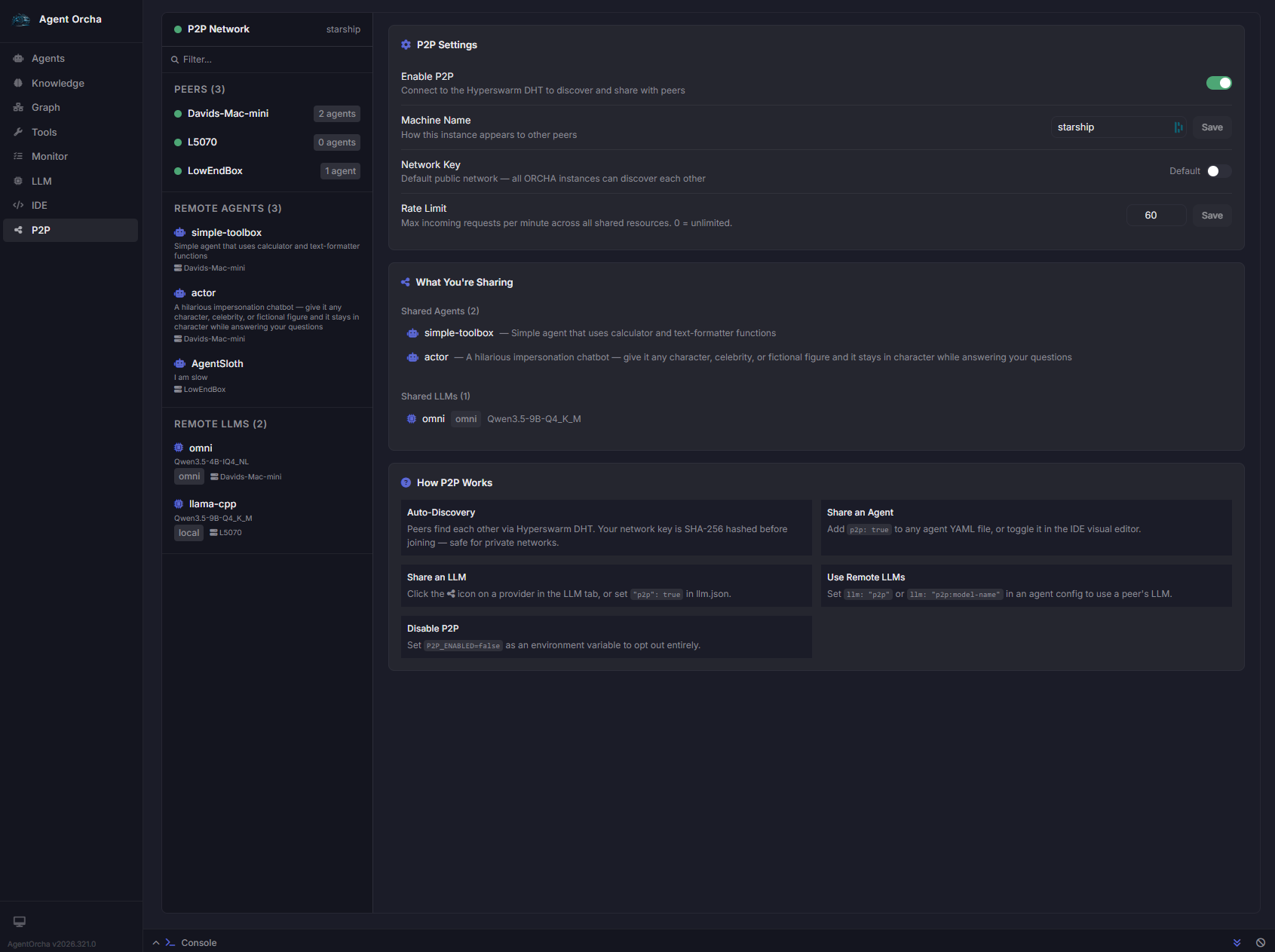

P2P agent mesh

Share agents and LLM capacity across nodes. Noise encryption. Credentialed private networks. No central server.

Deploy anywhere your data lives

P2P agent and model sharing

Hyperswarm-based networking lets nodes discover each other, share agent capacity, and pool LLM inference — all encrypted end-to-end with no central server.

- Credentialed private networks via shared key

- Share agents and remote LLMs across machines

- Per-peer rate limiting and catalog broadcast

- Decentralized — no single point of failure
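
Per-peer rate limiting is commonly built on a token bucket kept separately for each peer. The sketch below shows that general technique under stated assumptions; it is not the mesh's actual implementation. `PeerRateLimiter`, its parameters, and the peer IDs are hypothetical.

```python
import time


class PeerRateLimiter:
    """Token bucket per peer: `rate` tokens refill per second, up to `burst` tokens."""

    def __init__(self, rate, burst, clock=time.monotonic):
        self.rate = rate
        self.burst = burst
        self.clock = clock      # injectable clock, which keeps the limiter testable
        self._buckets = {}      # peer_id -> (tokens, time of last update)

    def allow(self, peer_id):
        """Return True and spend one token if this peer is within its limit."""
        now = self.clock()
        # A peer seen for the first time starts with a full bucket.
        tokens, last = self._buckets.get(peer_id, (float(self.burst), now))
        # Refill in proportion to elapsed time, capped at the burst size.
        tokens = min(self.burst, tokens + (now - last) * self.rate)
        if tokens >= 1.0:
            self._buckets[peer_id] = (tokens - 1.0, now)
            return True
        self._buckets[peer_id] = (tokens, now)
        return False
```

Because each peer has its own bucket, one noisy node exhausts only its own allowance and leaves the rest of the network unaffected.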

On-premises

Entire stack inside your perimeter. No internet required. Validated in CJIS, HIPAA, and ITAR environments.

Cloud

Fastest path to production. Use cloud LLM providers or bring your own GPU. Runs on Docker with docker-compose.

Hybrid

Sensitive data on-prem, non-sensitive in the cloud. Same YAML configs work in both environments.

Native desktop apps

Native binaries for macOS, Windows, and Linux with system tray support. No terminal required.

Hardened by design

Per-agent authentication

Independent token-based auth for every published agent. Scoped tool access per agent.

Sandboxed execution

Non-root containers, SSRF protection, symlink escape prevention, read-only SQL enforcement.
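
As one illustration of read-only SQL enforcement, SQLite can reject writes at the connection level through its `query_only` pragma, so an agent's SQL tool cannot modify data regardless of the statement it sends. This is a sketch of the idea using Python's standard `sqlite3` module, not a description of how Agent Orcha enforces it; `enforce_read_only` and `agent_sql_tool` are hypothetical helpers.

```python
import sqlite3


def enforce_read_only(conn):
    """Switch this connection to query-only mode; later writes fail inside SQLite."""
    conn.execute("PRAGMA query_only = ON")


def agent_sql_tool(conn, sql, params=()):
    """Run a statement on behalf of an agent and return all rows.

    On a query-only connection, INSERT, UPDATE, DELETE, CREATE, and DROP
    raise sqlite3.OperationalError instead of changing the database.
    """
    return conn.execute(sql, params).fetchall()
```

Enforcing the rule in the database engine avoids inspecting SQL strings, which is easy to get wrong.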

Encrypted P2P

End-to-end Noise encryption. Credentialed private networks. Per-peer rate limiting.

Full audit trail

Every action logged. Real-time monitoring. Version-controlled configs in git.

2-Week Money-Back Guarantee

We define success criteria together before the engagement starts. If we don't deliver a working AI workflow that meets those goals within two weeks, you get your money back. We take on the risk so you don't have to.

Schedule a Demo